Everyone is talking about AI.

Almost no one is ready for what it requires.

The assumption that breaks projects

“If we have cloud, we’re ready for AI.”

Not anymore.

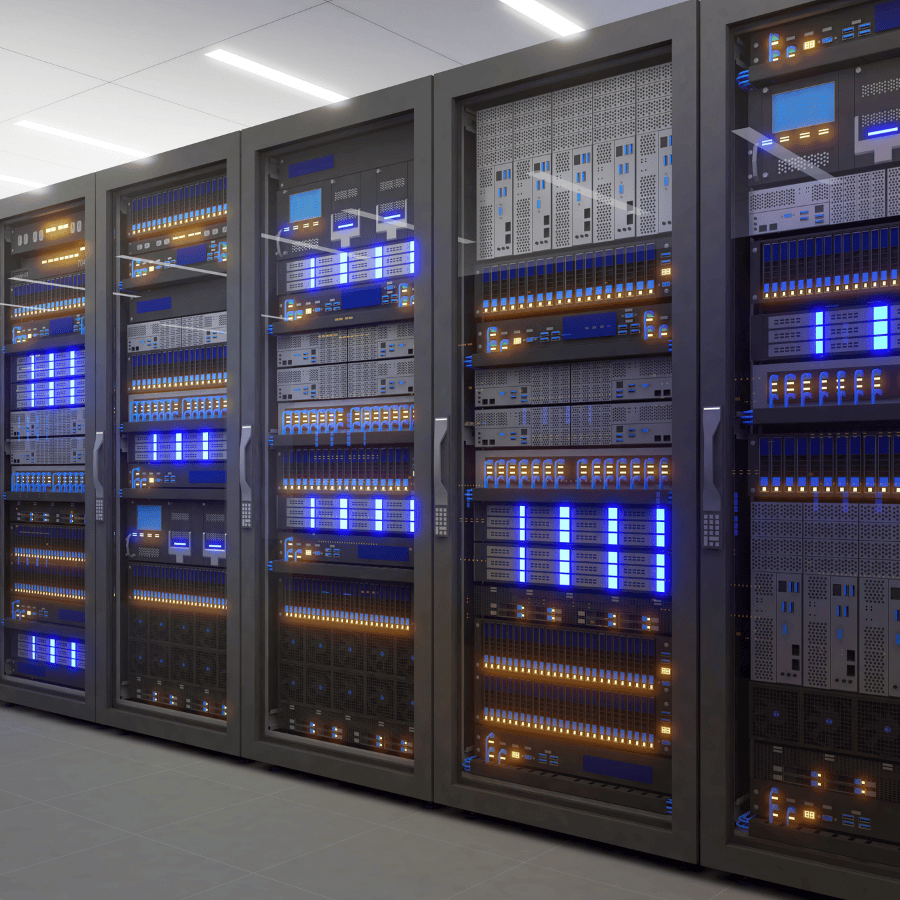

AI is infrastructure-heavy

AI depends on:

- power density

- cooling

- network throughput

- latency

Miss one and performance drops fast.

The real bottleneck: density

Traditional racks:

5–10 kW

AI workloads:

30, 50, even 100 kW+ per rack

Most facilities weren’t designed for this.
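To make the density gap concrete, here is a minimal sketch of the arithmetic. The 10 kW per-server draw and the 16-server cluster size are illustrative assumptions, not figures from any specific vendor or facility, and the function names are hypothetical.

```python
import math


def servers_per_rack(rack_kw: float, server_kw: float) -> int:
    """Whole servers that fit inside a rack's power budget."""
    return int(rack_kw // server_kw)


def racks_needed(n_servers: int, rack_kw: float, server_kw: float) -> int:
    """Racks required to host n_servers, limited by power only."""
    per_rack = servers_per_rack(rack_kw, server_kw)
    if per_rack == 0:
        raise ValueError("rack power budget is below a single server's draw")
    return math.ceil(n_servers / per_rack)


SERVER_KW = 10.0   # assumed draw of one multi-GPU server (illustrative)
CLUSTER_SIZE = 16  # assumed number of servers in the cluster (illustrative)

for rack_kw in (10, 30, 50, 100):
    fit = servers_per_rack(rack_kw, SERVER_KW)
    racks = racks_needed(CLUSTER_SIZE, rack_kw, SERVER_KW)
    print(f"{rack_kw:>3} kW rack: {fit} server(s) per rack, {racks} rack(s) for the cluster")
```

Under these assumptions, a rack at the top of the traditional range powers a single server, so the same cluster spreads across many more racks, with more floor space and longer cable runs between servers.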

Cooling is the constraint

At these densities, air cooling alone isn’t enough.

Production environments require:

- direct-to-chip cooling

- liquid-assisted systems, such as rear-door heat exchangers

Without them, expect:

- throttling

- inefficiency

- instability
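A rough way to see why air runs out of headroom is the heat balance Q = ṁ · c_p · ΔT. The sketch below compares the coolant flow needed to carry away one rack's heat using air versus water. The fluid properties are approximate room-temperature values, the 10 K temperature rise is an assumption, and the model ignores everything except bulk heat transport.

```python
# Approximate fluid properties near room temperature.
WATER_CP = 4186.0   # specific heat, J/(kg*K)
WATER_RHO = 997.0   # density, kg/m^3
AIR_CP = 1005.0     # specific heat, J/(kg*K)
AIR_RHO = 1.2       # density, kg/m^3


def volumetric_flow_m3_s(heat_kw: float, delta_t_k: float, cp: float, rho: float) -> float:
    """Volume flow needed to remove heat_kw with a coolant temperature rise of delta_t_k."""
    mass_flow_kg_s = heat_kw * 1000.0 / (cp * delta_t_k)  # from Q = m_dot * cp * dT
    return mass_flow_kg_s / rho


RACK_KW = 100.0  # one high-density rack, from the range quoted above
DELTA_T = 10.0   # assumed coolant temperature rise in kelvin (illustrative)

air = volumetric_flow_m3_s(RACK_KW, DELTA_T, AIR_CP, AIR_RHO)
water = volumetric_flow_m3_s(RACK_KW, DELTA_T, WATER_CP, WATER_RHO)

print(f"air:   {air:.2f} m^3/s")
print(f"water: {water * 60_000:.0f} L/min")
print(f"air needs about {air / water:.0f}x the volume flow of water")
```

For the same heat load and temperature rise, air needs a few thousand times the volume flow of water. That is the physical reason liquid has to get close to the chip once racks reach this range.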

Connectivity becomes critical

AI training is distributed across many servers.

You need:

- high-bandwidth east-west traffic between servers

- low latency

- direct cloud access

Otherwise:

- training slows

- costs rise
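How much the network matters can be estimated with a simplified model of ring all-reduce, a common way to synchronize gradients in distributed training. The payload size, node count, and link speeds below are illustrative assumptions, and the model ignores overlap with compute, protocol overhead, and congestion.

```python
def ring_allreduce_seconds(payload_bytes: float, nodes: int,
                           link_gbps: float, latency_s: float = 0.0) -> float:
    """Estimated time for one ring all-reduce over `nodes` peers.

    A ring all-reduce takes 2 * (nodes - 1) steps, and each step moves
    one chunk of payload_bytes / nodes over every link.
    """
    if nodes < 2:
        return 0.0
    steps = 2 * (nodes - 1)
    chunk_bits = payload_bytes * 8 / nodes
    return steps * (chunk_bits / (link_gbps * 1e9) + latency_s)


PAYLOAD_BYTES = 10e9  # assumed gradient size per sync, 10 GB (illustrative)
NODES = 8             # assumed number of servers (illustrative)

for gbps in (25, 100, 400):
    t = ring_allreduce_seconds(PAYLOAD_BYTES, NODES, gbps, latency_s=5e-6)
    print(f"{gbps:>3} Gb/s links: {t:.2f} s per synchronization")
```

In this model the synchronization time scales inversely with link bandwidth, and it is paid on every training step. Accelerators sit idle for whatever part of it cannot be overlapped with compute, which is where the extra cost comes from.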

The gap in Israel

There’s strong demand.

But there is limited infrastructure that can support:

- sustained high-density workloads

- production-scale AI environments

What to actually look for

- proven high-density deployments

- liquid cooling already running in production

- strong interconnection

- real operational experience

Where this is already happening

In Israel, only a few operators already support high-density AI workloads in production.

MedOne is one of them, with infrastructure designed for high-density environments, advanced cooling approaches, and direct connectivity to major networks and cloud platforms.

The shift

AI is forcing a new infrastructure standard.

The real question is not “Do you support AI?”

It’s “Can your infrastructure sustain it under real conditions?”